Attack on Sybil

Brief overview of Sybil-resistance types and Humanode's approach

Precursors of Sybil-resistance

One of the most complicated and intricate topics in trying to build a robust decentralized system is Sybil-resistance. In this article I would like to go over various approaches implemented by different companies and people throughout history to try and stop the attack of clones from happening and describe Humanode’s approach.

So what is a Sybil attack? In a nutshell, the attacker subverts the reputation system of a network service by creating a large number of pseudonymous identities and uses them to gain a disproportionately large influence. For example, Reddit has a reputation system called karma, where a user gets more karma points by receiving upvotes for submitted posts and comments. The premise of such a system is to improve the quality of content and limit the ability of bots to spam. This allows different subreddits to impose minimum karma requirements to post or comment in their threads. But as any created account is able to upvote content, a perpetrator can easily spawn countless accounts and upvote his own content to receive the necessary karma, breaking the very premise of the reputation system. The same goes for distributed ledger technology, but instead of some meaningless articles and NSFW posts showing up in the wrong places, the stakes here are much higher.

First, let us go over why it is so crucial to maintain Sybil-resistance when dealing with DAOs and DLTs. Sybil attacks are really powerful when they target public and permissionless systems, because in most cases these systems can’t decide upon anything without reaching a consensus. Consensus mechanisms in validation vary from one network to another, but the basic principle is quite simple - the system goes where the majority of the validation power leads it. For example, in Proof-of-Work systems validation power is divided among the participants of the network based on the power of the mining equipment they have. Spawning numerous accounts is useless because the underlying protocol cares not about the number of peers but rather about the hashing power each peer possesses. If there is one peer that has 51% of all the hashing power against thousands of peers that cumulatively wield less than he does, then his decision outweighs all of theirs. This approach makes a potential takeover very costly, as a malicious actor would have to spend a lot of time, effort and money to buy enough mining equipment to gain the 51% that would allow him to change the system in any way he sees fit. The same goes for Proof-of-Stake based systems, but instead of mining power, PoS allocates validation power according to the amount of assets staked. A malicious actor would have to buy a tremendous amount of assets to carry out an attack. Both of these approaches fall under the recurring cost type of Sybil defense, but we will get to this one later.

Now imagine if a system gave out equal amounts of validation power to all peers without the necessity to stake or mine anything (hinting at Humanode). It means that an attacker could spawn numerous peers out of thin air, and without proper defense a perpetrator could easily overwhelm the honest participants of the network by amassing a large number of fake identities that all wield the same validation power. Equal distribution of power among nodes seems fair and reasonable compared to the concentration of power in the hands of a few through PoW and PoS, but it lacks the recurring cost defense, which creates a barrier in the form of costs that a malicious actor would have to bear to take over those systems.
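
To make the contrast concrete, here is a minimal sketch of how an attacker's share of validation power behaves under the two models. The function names and the numbers are hypothetical and only illustrate the argument above; they are not taken from any real protocol.

```python
def hash_weighted_share(attacker_hashrate: float, honest_hashrates: list[float]) -> float:
    """Attacker's share when power is proportional to hash rate (PoW-style).

    Splitting the same hash rate across many identities does not change
    this value, so spawning accounts is useless.
    """
    total = attacker_hashrate + sum(honest_hashrates)
    return attacker_hashrate / total


def per_identity_share(attacker_identities: int, honest_identities: int) -> float:
    """Attacker's share when every identity gets one equal unit of power."""
    return attacker_identities / (attacker_identities + honest_identities)


# Hypothetical network: 1000 honest peers with 1 unit of hash rate each,
# and an attacker who owns 10 units of hash rate.
share_pow = hash_weighted_share(10.0, [1.0] * 1000)

# Same attacker in an equal-power network with no Sybil defense:
# 2000 identities spawned for free against 1000 honest ones.
share_equal = per_identity_share(2000, 1000)
```

In the first case the attacker stays at roughly 1% no matter how many accounts he creates, while in the second case free identities alone are enough to pass the majority mark.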

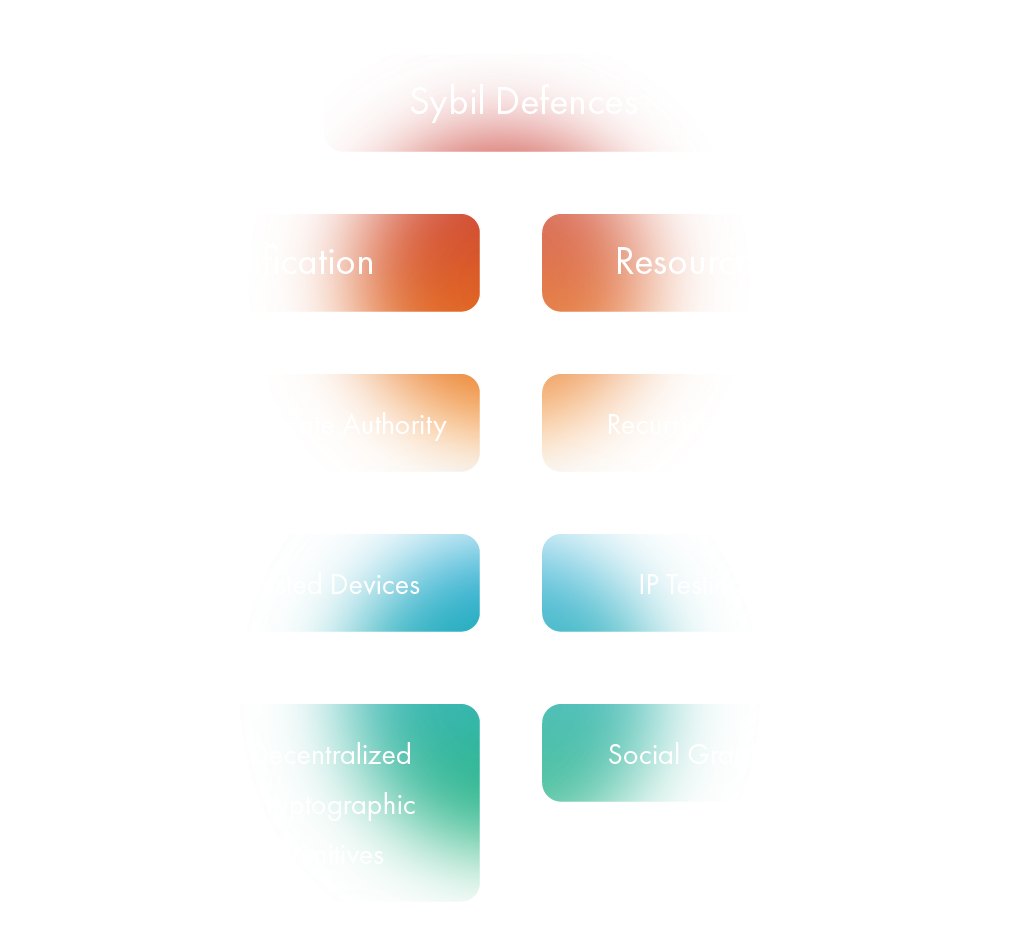

Traditional Sybil-Defenses

How do we preserve an equal distribution of validation power among peers while still being able to resist the attack of the clones? To answer that question properly, let’s first go over the types of Sybil defenses that exist, to understand their advantages and shortcomings. Existing methods can be roughly divided into 6 approaches.

The ultimate goal of Sybil defense is to detect, isolate and punish Sybil identities. The only method that can potentially guarantee full Sybil-resistance is the use of a certificate authority. All other methods are probabilistic: they produce both false positives and false negatives, and therefore tolerate some Sybil nodes. So in decentralized systems, a realistic target is to minimize the false negative rate as much as possible.

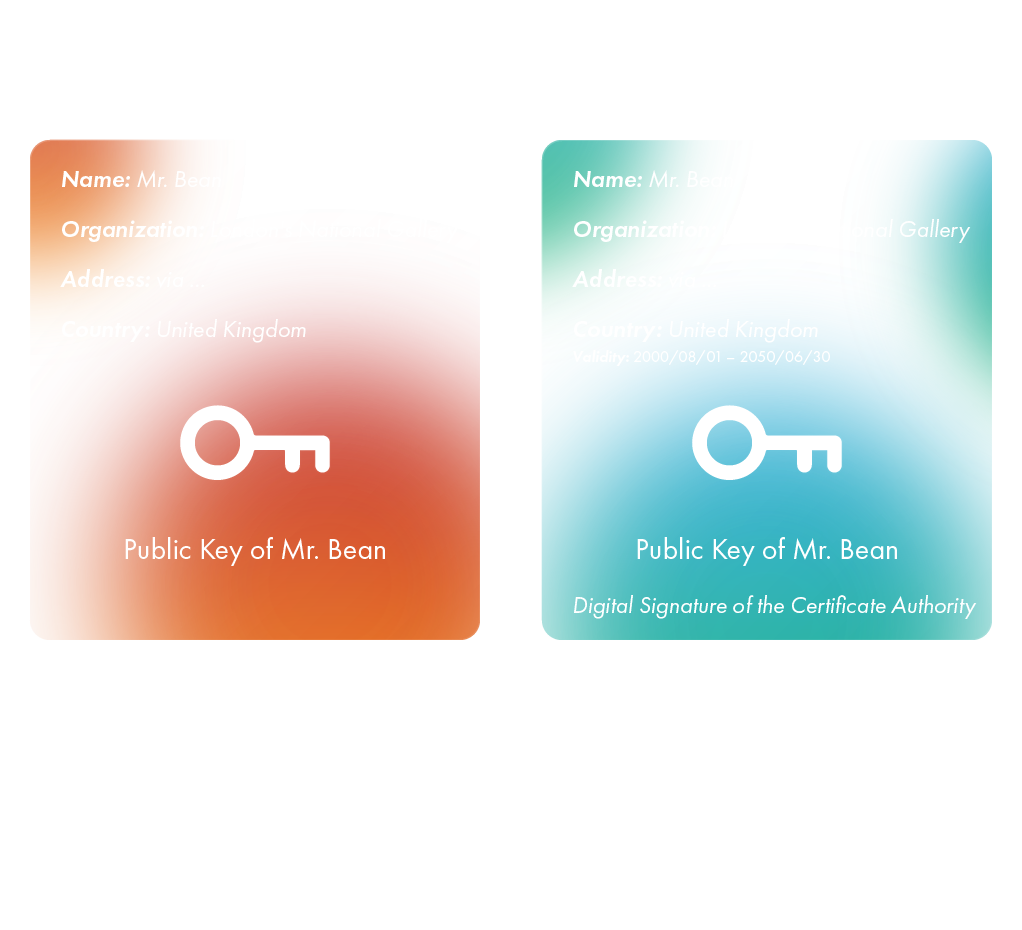

Trusted certification

Trusted certification is the most popular approach, proven to be effective many times over by various simulations, tests and real-life solutions. A third-party provider or a centralized authority is used to ensure that identities are unique by certifying the procedure by which this uniqueness was proven. The certificate proves the ownership of a public key by the named subject of the certificate. The certificate authority takes the form of a trusted third party, because it is trusted both by the owner of the certificate and by the second party, which needs to know whether the identity is genuine and thus heavily relies on the certification. Certification types vary, from the authorities that sign certificates used in HTTPS to national governments issuing identity cards.

Trusted Devices

Trusted devices or trusted modules store certificates, keys, or authentication strings previously assigned to users by centralized authorities. One of the most common examples of trusted devices could be a government issued digital signature to validate the authenticity of digital messages or documents. A valid digital signature, where the prerequisites are satisfied, gives a recipient a very strong reason to believe that the message was created by a known sender (authentication), and that the message was not altered in transit.

Cryptographic primitives

When cryptographic primitives are used, only legitimate peers are eligible for participation in the overlay. The key infrastructure is built in such a way that peers hold some kind of access credentials to show that they are honest. Secret sharing or threshold signatures are used so that supposedly honest users collaborate to authenticate peers. In other words, if enough nodes possessing a crypto primitive declare that a new node is legit, then it is considered to be legitimate. Some approaches also use thresholds to grant that primitive to the freshly deployed node as well, so that it is considered truly honest by the other peers in the network.
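
The acceptance rule itself can be sketched in a few lines. This is only a plain vote count that models the "enough nodes vouch for the newcomer" logic; it does not implement secret sharing or threshold signatures, and the names `is_admitted`, `vouching_peers` and `trusted_peers` are hypothetical.

```python
def is_admitted(vouching_peers: set[str], trusted_peers: set[str], threshold: int) -> bool:
    """Accept a new node if at least `threshold` already-trusted peers vouch for it.

    Vouches from peers that are not in the trusted set are ignored, so a
    newcomer cannot be admitted by other unverified newcomers.
    """
    valid_vouches = vouching_peers & trusted_peers
    return len(valid_vouches) >= threshold


trusted = {"node-a", "node-b", "node-c", "node-d"}

# Three trusted peers vouch, and the (hypothetical) threshold is 3.
accepted = is_admitted({"node-a", "node-b", "node-c"}, trusted, threshold=3)

# Vouches from unknown peers do not count towards the threshold.
refused = is_admitted({"node-a", "sybil-1", "sybil-2"}, trusted, threshold=3)
```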

Resource testing

The underlying principle of resource testing as a Sybil defense is to check whether a set of identities that supposedly belong to different users owns enough resources to match the number of identities. The resources that can be tested vary. They include computational power, bandwidth, memory, IP addresses, trust credentials and hardware specifications, as well as mining power and the amount of staked assets. Apart from the last two, all of them have proven ineffective because the resources can be emulated, but they can still serve as a baseline defense against less sophisticated perpetrators.

IP testing

This approach tries to determine the geographical location of the peers in the network and match it to their activities. If the amount of activity generated in the same area is disproportionate to the number of IP addresses there, then the probability that some of this activity is due to Sybil identities rises drastically. Unfortunately, this approach is completely obsolete and ineffective, due to its inaccuracy and the use of botnets.
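
As a rough illustration of the check described above, the sketch below flags regions where activity is out of proportion to the number of distinct IP addresses seen there. The function name and the `max_activity_per_ip` limit are assumptions made for the example, not parameters of any real system.

```python
def flag_regions(
    activity_count: dict[str, int],
    ip_count: dict[str, int],
    max_activity_per_ip: int,
) -> set[str]:
    """Return the regions whose activity exceeds what their IP addresses could plausibly generate."""
    flagged = set()
    for region, activities in activity_count.items():
        ips = ip_count.get(region, 0)
        if activities > ips * max_activity_per_ip:
            flagged.add(region)
    return flagged


# Hypothetical data: region "B" produces 5000 actions from only 10 addresses.
suspicious = flag_regions(
    activity_count={"A": 100, "B": 5000},
    ip_count={"A": 50, "B": 10},
    max_activity_per_ip=10,
)
```

A botnet spreads the same activity across many real addresses in many regions, which is exactly why a check like this is easy to defeat.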

Recurring cost

As I’ve already mentioned, imposing economic costs as artificial barriers to entry may be used to make Sybil attacks more expensive. Proof-of-Work, for example, requires a user to prove that they expended a certain amount of computational effort to solve a cryptographic puzzle. In Bitcoin and related permissionless cryptocurrencies, miners compete to append blocks to a blockchain and earn rewards roughly in proportion to the amount of computational effort they invest in a given time period. Investments in other resources such as storage or stake in existing cryptocurrency may similarly be used to impose economic costs.
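
A minimal hashcash-style sketch of such a puzzle is shown below. It is not Bitcoin's actual block format or difficulty encoding; `solve_puzzle`, `verify_puzzle` and the `difficulty_bits` parameter are names chosen for the example. The point is the asymmetry: finding a nonce takes many hash attempts, while checking it takes one.

```python
import hashlib


def _digest_value(data: bytes, nonce: int) -> int:
    """SHA-256 of the data plus an 8-byte nonce, interpreted as a big integer."""
    digest = hashlib.sha256(data + nonce.to_bytes(8, "big")).digest()
    return int.from_bytes(digest, "big")


def solve_puzzle(data: bytes, difficulty_bits: int) -> int:
    """Search for a nonce whose hash has at least `difficulty_bits` leading zero bits."""
    target = 1 << (256 - difficulty_bits)
    nonce = 0
    while _digest_value(data, nonce) >= target:
        nonce += 1
    return nonce


def verify_puzzle(data: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Check a claimed solution with a single hash computation."""
    return _digest_value(data, nonce) < (1 << (256 - difficulty_bits))


# Each extra difficulty bit doubles the expected number of attempts.
nonce = solve_puzzle(b"example block", difficulty_bits=12)
is_valid = verify_puzzle(b"example block", nonce, difficulty_bits=12)
```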

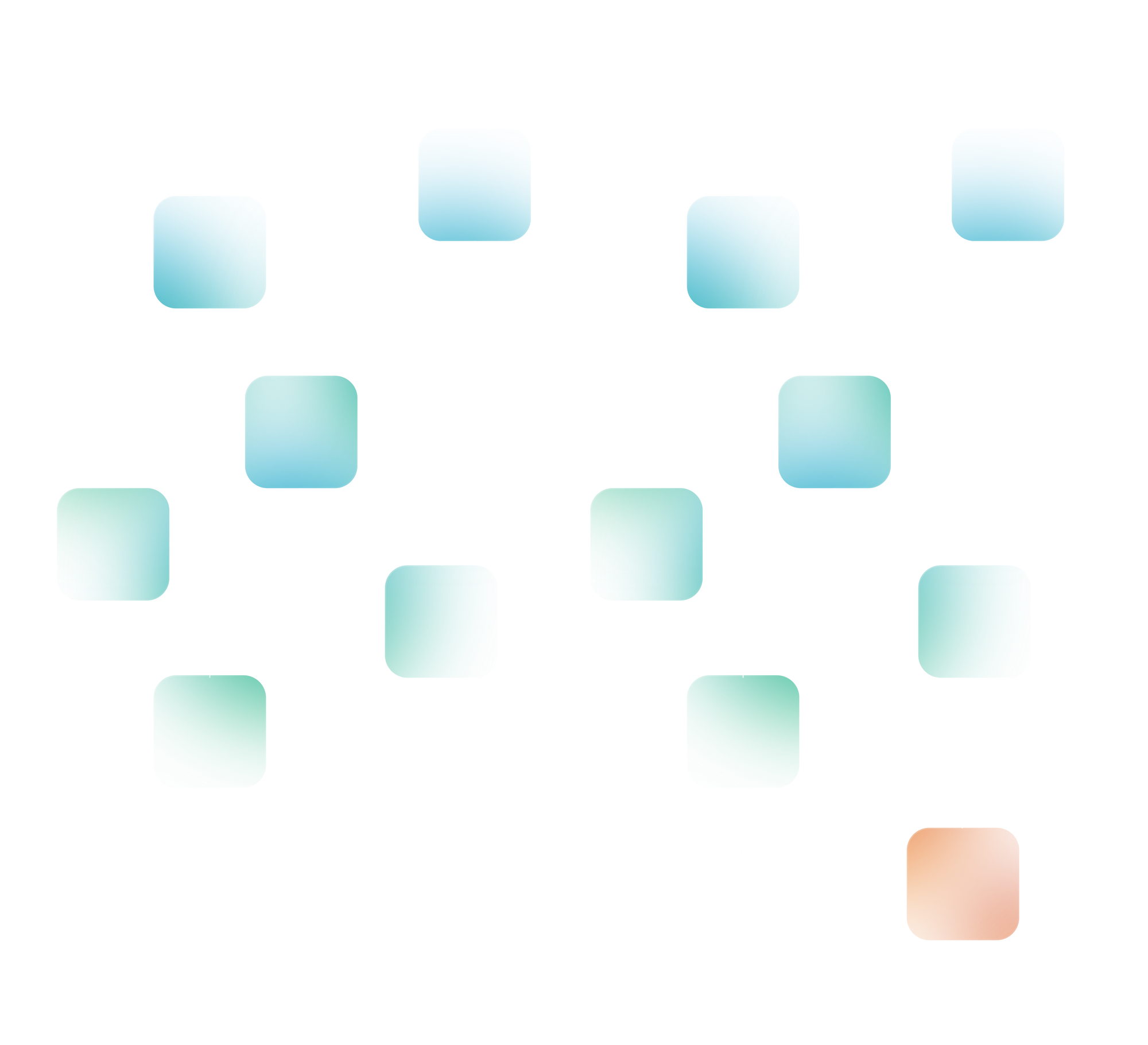

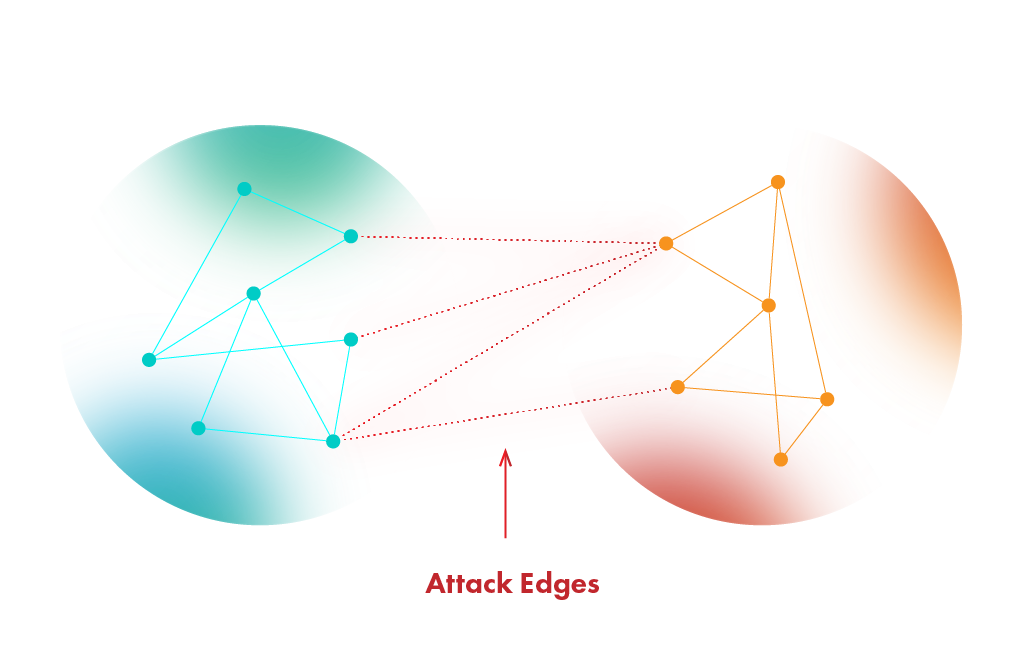

Social Graphs

Out of all methods that exist to fight Sybil in a decentralized fashion, the use of social graphs comes the closest to limiting the impact of Sybil nodes on the network. Social graph approaches were designed to operate without any central authority. The use of social graphs implies that there is some number of truly honest nodes that are well-enmeshed inside the system. In a graph, nodes are connected to one another, and different regions of the network have edges through which they are connected to other regions.

When a new node is added, it takes a so-called “random walk” across the other nodes in the system, commonly 10-15 “steps” long. A step basically means writing down the public key of the node it randomly steps on.

Each node on the way becomes a witness and writes down the walker’s public key as well. When the route ends, the originator receives the list of all the witnesses it stepped on. When the time comes to check the originator, it is treated as a suspect node. The suspect sends the public keys it collected to a verifier node (how the verifier is chosen varies, as there are many different approaches to social graphs, but in most cases it is one of the honest nodes), and the verifier compares the suspect's list of witnesses to its own list. After that, one of three things might happen:

- If there are no intersections among the two sets then the verifier aborts and rejects the suspect

Otherwise, the verifier requests the nodes at the intersection to verify whether the suspect has a public key registered within them. Then,

- If the suspect is verified by the intersection nodes then the verifier accepts the suspect as a new node.

- If the intersection nodes don’t verify the suspect then the verifier marks it as a Sybil node.
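
The three outcomes above can be sketched as follows. This is a simplified model rather than the SybilGuard protocol itself: the graph is a plain adjacency dictionary, `registry` is a hypothetical map from each witness to the walker keys it has written down, and I assume that every node in the intersection must confirm the suspect.

```python
import random


def random_walk(graph: dict[str, list[str]], start: str, steps: int, rng: random.Random) -> list[str]:
    """Walk `steps` random hops from `start` and return the witnesses visited."""
    route = []
    current = start
    for _ in range(steps):
        current = rng.choice(graph[current])
        route.append(current)
    return route


def verify_suspect(
    suspect_key: str,
    suspect_witnesses: list[str],
    verifier_witnesses: list[str],
    registry: dict[str, set[str]],
) -> str:
    """Return "rejected", "accepted" or "sybil" following the three cases in the text."""
    intersection = set(suspect_witnesses) & set(verifier_witnesses)
    if not intersection:
        return "rejected"  # the two routes never cross
    # Assumption: all intersection nodes must have the suspect's key registered.
    if all(suspect_key in registry.get(node, set()) for node in intersection):
        return "accepted"
    return "sybil"


# Witnesses "b" and "c" wrote down the key of a walker that really passed by.
registry = {"b": {"pk_new"}, "c": {"pk_new"}}
honest = verify_suspect("pk_new", ["b", "c"], ["c", "d"], registry)
forged = verify_suspect("pk_fake", ["b", "c"], ["c", "d"], registry)
```

A suspect that simply claims a list of witnesses it never visited fails at the last step, because those witnesses have no record of its key.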

It all might sound pretty complicated, but the main goal is to limit the number of edges between Sybil clusters of nodes and honest ones. If malicious users create too many Sybil identities, the graph becomes really strange, with a disproportionate number of nodes connected to the honest regions through the same edge. The whole purpose of social graphs is to limit the attack edges through which perpetrators can attack the honest region.

Although this type of defense has been proven to be effective, it assumes that a region of trusted nodes already exists. This means that there is a group of people that believe in one another. The original research suggests that they might be friends and know each other in real life. It means that all other nodes are checked against the honest region, or in other words a small enclave of people that trust each other, which makes it a Proof-of-Authority based system. Another thing is that the power to identify Sybil nodes is only guaranteed if attackers collectively control only a few links between themselves and the honest nodes in the social graph. If a malicious actor somehow tricks the people inside the enclave and gets his node to become a part of the honest region, then it’s game over. Needless to say, it is easier to hack or DDoS a small number of people. Social graphs have their own flaws, but they are the closest that we have come to protecting systems from Sybil without recurring costs.

Proof-of-Personhood

Finally we come to PoP. For many years researchers have struggled to introduce a robust decentralized system with permissionless consensus in which each unique human participant obtains one equal unit of voting and validation power. Back in 2014 Vitalik Buterin formulated the problem of creating a "unique identity system" for crypto, which would give each human user one anti-Sybil participation token. The first published work to use the term Proof-of-Personhood appeared in 2017, by Maria Borge et al., proposing an approach based on pseudonymous parties.

Since then there have been many different approaches that tried to resolve this issue.

Pseudonymous parties

Basically this is the approach proposed by Borge et al. It implies some kind of in-person event, where people meet in small groups to verify each other’s physical presence. The encounters themselves are conducted simultaneously at random places. There are two major drawbacks to this approach. First, some clusters can be malicious and verify people that don't exist. Second, the process is inconvenient for the users, as they might have conflicting responsibilities at those times.

Online Turing tests

Some solutions tried to implement Turing tests to verify unique human existence. But the latest advancements in artificial intelligence and deepfake technologies have rendered this approach obsolete. Also, some perpetrators force visitors of their phishing sites to solve CAPTCHAs, the results of which can be retransmitted to fool the verification and spoof non-existent identities.

Social networks graphs

Another approach is basically social graphs, but where the nodes are unique human identities. It uses the same random walks, suspects, witnesses and so on, and the same flaws persist.

Strong identities

This approach implies centralized certification and requires participants to have verified identities. Basically, some third-party provider conducts KYC or biometric processing of a person and stores that data in a secured database, to be able to check in the future that this person is unique and can’t create more than one node. After the verification the identity is anonymized (there are many approaches to this) and is subsequently used. There were also some proposals to create pseudonymous identities based on government-issued documents. There are obvious drawbacks to this approach. First, the database of verified identities is a critical point of failure. If it is breached, identities might fall into the hands of malicious actors, which would be a complete disaster. Second, there is a risk that some potential users might be excluded, either because they are not willing to go through identity verification due to privacy and surveillance concerns, or because they are wrongly rejected by errors in biometric tests. Third, the provider becomes a very powerful trust layer on which all verified participants must rely, making the system somewhat Proof-of-Authority based.

Humanode’s Approach

Humanode tries to create a system where 1 human = 1 node and nodes are equal in terms of validation and voting power. A Sybil attack on such a system is quite dangerous, as there are no big recurring costs to stop a potential attacker from spawning countless nodes. To stop this from happening, the Humanode team came up with an idea that combines liveness detection certification methods with trusted devices and recurring cost mechanisms, but with upgrades that make them less centralized, more secure and private.

Before reading through the solution itself, we have to keep in mind that this approach is reasonable only for human nodes (validators) because we should be able to stop bad actors from spoofing the validating nodes. The UX for ordinary users is much lighter.

Private Biometric Identities with Liveness detection

This approach combines Fully Homomorphically Encrypted biometric processing with liveness detection certification.

FHEd embedded templates

This part I’ve borrowed from another article that I’ve written, where I went over Humanode in simple terms.

Let’s go over some biometrics 101 on what happens when you use facial recognition. When you use biometrics on your device, the camera takes several photos of your face, chooses the best image and puts floating points on the face: small dots around distinct facial features such as the eyebrows, nose, mouth and chin. Commonly, around 70 points are used to capture the face.

Every one of us, besides identical twins, has unique facial traits, which is why every floating-point face map is different from any other. The floating points are used to crop and normalize the image, and afterwards this map is fed into a Deep Neural Network (DNN).

The DNN transforms your face into 128 numbers that represent your facial traits as coordinates on a 128-dimensional vector space – that is called an embedded template and it is used as your personal key to sign into apps etc. After the embedded template is ready your original photographs and the floating-point map are deleted.
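
To show how such a template acts as a key, here is a minimal plaintext sketch of matching two 128-number templates by Euclidean distance. The threshold of 0.6 is a hypothetical value chosen for the example; real systems tune it for their own network, and this sketch works on unencrypted numbers only.

```python
import math

TEMPLATE_SIZE = 128
MATCH_THRESHOLD = 0.6  # hypothetical; depends on the neural network used


def euclidean_distance(a: list[float], b: list[float]) -> float:
    """Distance between two templates in the 128-dimensional space."""
    if len(a) != TEMPLATE_SIZE or len(b) != TEMPLATE_SIZE:
        raise ValueError("templates must contain exactly 128 numbers")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def is_same_person(a: list[float], b: list[float], threshold: float = MATCH_THRESHOLD) -> bool:
    """Two templates match when they lie close enough to each other."""
    return euclidean_distance(a, b) <= threshold


enrolled = [0.1] * TEMPLATE_SIZE
# A new capture of the same face lands very close to the enrolled template.
same_face = [0.1] * (TEMPLATE_SIZE - 1) + [0.15]
# A different face lands further away.
other_face = [0.1] * (TEMPLATE_SIZE - 1) + [1.1]

match = is_same_person(enrolled, same_face)
mismatch = is_same_person(enrolled, other_face)
```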

Embedded templates, if stolen, could be fed into another neural network trained to recover the original facial features, meaning that if some perpetrator gets their hands on them, they can revert them to an image that closely resembles the original face.

Now losing your password is one thing, because you can easily change it, but losing your face seems kind of scary. That is where Fully Homomorphic Encryption comes in handy. We add an additional step right after we get the template: we use one of many encryption algorithms to encrypt the embedded template, then we erase the unencrypted template along with everything else and operate only on the so-called “ciphertext” (the data generated through encryption) to conduct any operations (registration, login, signing transactions, etc.).

Even if the perpetrator manages to get it somehow, he won’t be able to revert it back to the original state even closely. Not only that but the system that operates the ciphertext won’t be able to tell who you are. Basically, FHE provides the means for your biometrics to be private and solely belong to you, while allowing you to match the faces and provide authorization.

This approach was practically implemented and extensively tested in the paper “HERS: Homomorphically Encrypted Representation Search” by Engelsma et al. in 2020, and was theoretically laid out and tested by Boddeti in 2018.

Liveness Detection

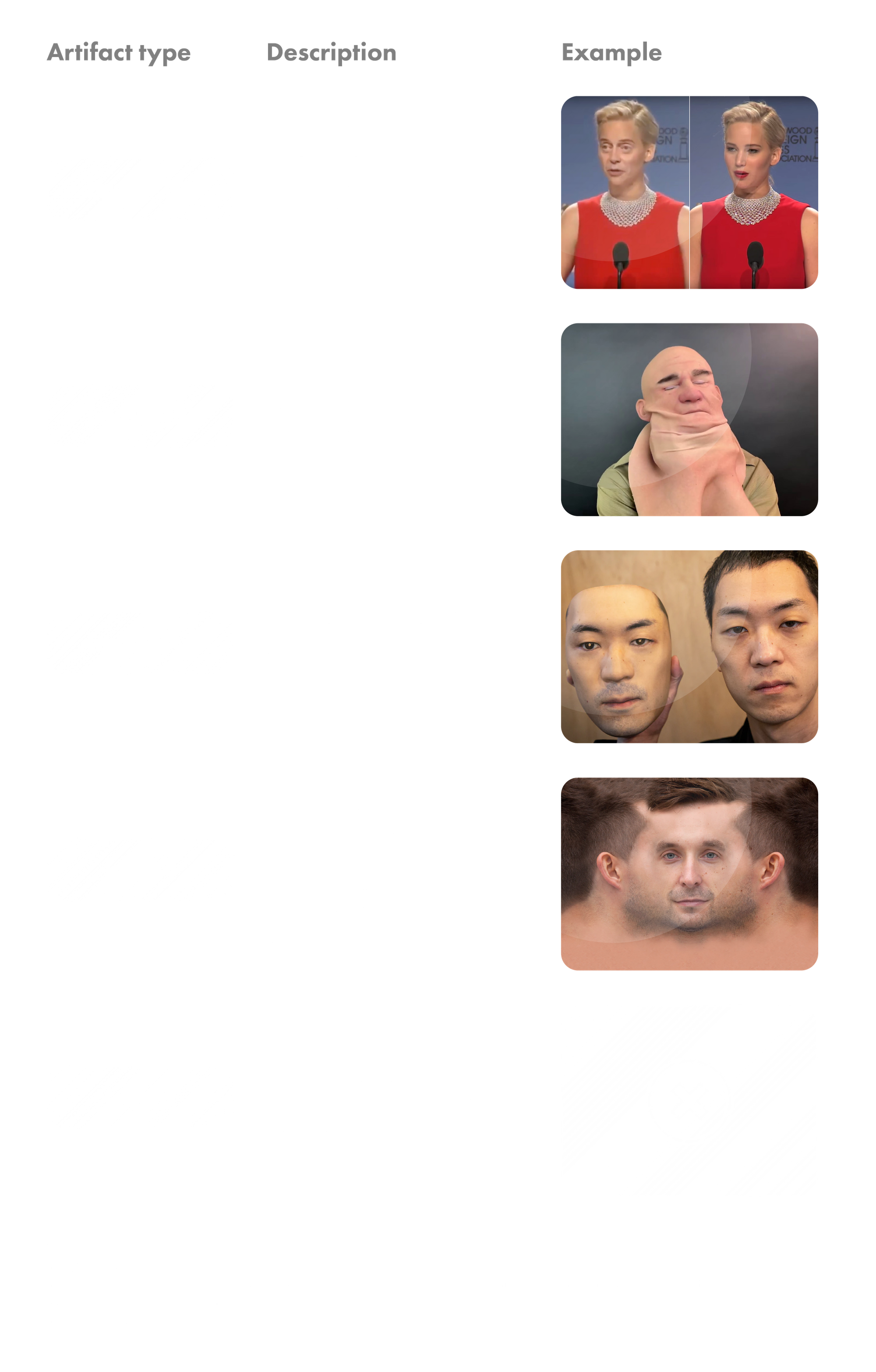

In biometrics, Liveness Detection is an AI computer system’s ability to determine that it is interfacing with a physically present human being and not an inanimate spoof artifact.

Almost seventy years ago Alan Turing developed the test which measures a computer's ability to act as a human being. Liveness Detection is an AI that determines if a computer is interacting with a live human. The people that deal with consensus mechanisms would call it proof-of-personhood through biometric means.

A non-living object that exhibits human traits is called an “artifact”. The goal of the artifact is to fool biometric sensors into believing that they are interacting with a real human being instead of an artificial copycat. When an artifact tries to bypass a biometric sensor, it's called a "spoof." Artifacts include photos, videos, masks, deepfakes and many other sophisticated methods of fooling the AI. Another method of trying to bypass the sensors is by trying to insert already captured data into a system directly without camera interaction. The latter is referred to as “bypass”.

In the biometric authentication process, liveness data should be valid only for a set period of time (up to several minutes) and should then be deleted. As this data is not stored, it can’t be used to create corresponding artifacts that spoof liveness detection and bypass the system.

The security of liveness detection really depends on the amount of data the sensors are able to capture. That is why low resolution cameras might never be totally secure. For example, if we take a low-res camera and put a 4K monitor in front of it, then weak liveness detection methods such as turning your head, blinking, smiling or speaking random words can be easily emulated to fool the system.

In 2017, the International Organization for Standardization (ISO) published the ISO/IEC 30107-3:2017 standard on presentation attack detection (PAD), which went over ways to stop artifacts such as high-resolution photos, commercially available lifelike dolls and silicone 3D masks from being used to spoof identities. Since then, sanctioned PAD tests for biometric authentication solutions have been created so that any new solution meets the specified requirements before hitting the market. The most famous of them all is the iBeta PAD Test. It is a strict and thorough evaluation of biometric processing solutions, meant to establish whether they can withstand the most intense presentation attacks. Four years have passed since then, and this standard is now considered outdated by many specialists in the field, while iBeta PAD tests have gradually become easy to pass with modern sophisticated spoofing methods.

FaceTec, one of the leading companies in liveness detection, divides attacks into 5 categories that go way beyond those stated in the 30107-3:2017 standard and represent real-world threats much more precisely.

The goal of Humanode is to implement and upgrade (or build on) a solution whose security requirements mitigate all of the attack levels mentioned above, as these go way beyond those covered in the latest issue of the standard and represent the threats that exist out there in the real world much more realistically.

At first Humanode is to implement an already working solution that would be able to tell apart humans and artifacts and certify that it is indeed a real human being, and not a "spoof" that is participating in the enrollment session. But the end goal is to create a native decentralized liveness detection protocol that would satisfy the security requirements and be decentralized in terms of ownership, meaning that it will equally belong to every validator in the network. This way, in time, we will be able to overcome centralization in terms of liveness certification. But for now, any solution that safeguards the network from Sybil attacks, should do just fine and give the ability to create a robust Sybil-resistant system.

Trusted Devices

Although liveness detection protocols are powerful tools to fight Sybil attacks, they are always limited by the device they reside on. As mentioned above, even state-of-the-art liveness detection can be fooled by level 2-3 presentation attacks if the resolution of the device it runs on is low. In order to strengthen Sybil-resistance, trusted devices containing liveness detection protocols must be deployed. A custom biometric device that contains two high-resolution cameras and an additional IR (infrared) sensor would enable much broader multi-modality in terms of biometric processing, as well as increasing the depth and quality of the data so that the liveness protocol is as efficient as possible. Moreover, a private key can be injected into the custom device, like into any digital camera out there, so that the snapshots coming from the device are signed with it. Even if you hack the device and try to bypass the sensors by feeding fake data into the system, it would be useless, because we can limit the number of identities created from such a device to any number. We are thinking one or two identities max. Another thing is that software can’t really tell whether it is being run on a virtual machine or a real computer, and that is why, without a special device, it is not possible to implement a defense that limits the number of identities created from a device. The price tag for such a device would not be higher than $200 at the beginning, and with constant optimization of hardware and production methods it will gradually drop, increasing accessibility to a larger pool of people around the globe.
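
The per-device limit can be sketched like this. To keep the example self-contained, a standard-library HMAC stands in for the asymmetric signature that a real device with an injected private key would produce, and `DeviceRegistry` with its methods is a hypothetical design, not Humanode's implementation.

```python
import hashlib
import hmac

MAX_IDENTITIES_PER_DEVICE = 1  # the text suggests one or two identities max


class DeviceRegistry:
    """Accepts enrollments only from known devices, up to a fixed cap per device."""

    def __init__(self, device_keys: dict[str, bytes]) -> None:
        self._device_keys = device_keys
        self._enrolled: dict[str, int] = {}

    def enroll(self, device_id: str, snapshot: bytes, tag: bytes) -> bool:
        key = self._device_keys.get(device_id)
        if key is None:
            return False  # unknown device, e.g. software running in a virtual machine
        expected = hmac.new(key, snapshot, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            return False  # snapshot was not authenticated by this device
        if self._enrolled.get(device_id, 0) >= MAX_IDENTITIES_PER_DEVICE:
            return False  # cap reached, even for a hacked but genuine device
        self._enrolled[device_id] = self._enrolled.get(device_id, 0) + 1
        return True


device_key = b"key-injected-at-manufacture"
registry = DeviceRegistry({"device-1": device_key})

snapshot = b"raw sensor data"
tag = hmac.new(device_key, snapshot, hashlib.sha256).digest()

first = registry.enroll("device-1", snapshot, tag)   # genuine device, first identity
second = registry.enroll("device-1", snapshot, tag)  # same device again, over the cap
```

The cap is what bounds the damage: a compromised device can feed in fake data, but it still yields at most the allowed number of identities.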

Recurring cost

Besides biometrics, Humanode utilizes recurring costs to keep Sybil at bay. There is a pretty easy equation that corresponds to the recurring cost of a potential attack. Imagine there are 10 000 validators in the network. To halt the network, one would have to amass 33% + 1 of all identities that exist, or 3334 identities out of 10 000.

Where,

Liveness FAR - Liveness False Acceptance Rate meaning the probability of the live detection protocol admitting an artifact instead of a real human being;

PSC Initiation - Private Smart Contract initiation cost for decentralized key management and biometric score decryption. As Humanode uses a Secret Smart Contract layer, this is basically the cost of utilizing its private smart contracts;

FHE Matching - Fully Homomorphically Encrypted search and matching operations run on an encrypted database in IPFS;

LivDet - Liveness detection certification costs;

Testnet Validation - the costs incurred by validators during the 2 weeks before being admitted to the mainnet;

Biometric Device Price - the cost of the device required to conduct proper biometric processing to become a validator;

Number of Validators - number of validating nodes in the system.
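
The identity thresholds are simple arithmetic and can be checked directly. The cost estimate in the same sketch is only an illustration of how the terms defined above could be combined: the equation itself is not reproduced in this text, so the formula in `estimated_halting_cost` is my assumption, not Humanode's published one. It assumes that every enrollment attempt pays the PSC, FHE and LivDet costs, that an artifact is accepted with probability equal to the Liveness FAR (so 1 / FAR attempts are needed on average), and that the testnet and device costs are paid once per accepted identity.

```python
def halting_threshold(number_of_validators: int) -> int:
    """Identities needed to halt the network: one third of all validators plus one."""
    return number_of_validators // 3 + 1


def takeover_threshold(number_of_validators: int) -> int:
    """Identities needed to overtake the network: two thirds of all validators plus one."""
    return (2 * number_of_validators) // 3 + 1


def estimated_halting_cost(
    number_of_validators: int,
    liveness_far: float,
    psc_initiation: float,
    fhe_matching: float,
    livdet: float,
    testnet_validation: float,
    biometric_device_price: float,
) -> float:
    """Assumed cost model (see the hedges above), in the same currency as the inputs."""
    if not 0 < liveness_far <= 1:
        raise ValueError("liveness_far must be a probability in (0, 1]")
    per_attempt = psc_initiation + fhe_matching + livdet
    expected_attempts = 1 / liveness_far  # mean number of tries until one spoof is accepted
    per_identity = per_attempt * expected_attempts + testnet_validation + biometric_device_price
    return halting_threshold(number_of_validators) * per_identity


to_halt = halting_threshold(10_000)
to_overtake = takeover_threshold(10_000)
```

Under this model the cost grows both with the number of validators and with every improvement in liveness detection that lowers the False Acceptance Rate.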

Our prediction is that with the evolution of different technological fields, the False Acceptance Rate and costs should drastically decrease. The more human nodes there are, the more it costs for a potential bad actor to spoof enough identities to halt the system, and even more to try and reach 66% + 1 to overtake it.

Human node admission protocol

In order to fully understand this section, I suggest that you read through the section that covers the Vortex in the Humanode whitepaper.

I wouldn’t call this one a proper Sybil defense, but rather a way to make the life of potential bad actors much harder. There are two types of nodes in Humanode: validator and relay. The validator nodes store the full state of the network, and conduct all of the required computations. The number of validator nodes is defined by the DAO and is technically limited by the specs of Epoch-CRDT. We plan to start with 10 000 validating nodes, gradually increasing the number as the system grows and becomes more stable and secure. The relay nodes hold data that increases the speed of the network by relaying broadcasts and holding a part of the state. The number of relay nodes that can be created is limitless, but these nodes do not really participate in consensus.

There are several characteristics that affect who becomes a validator node. They are based on Proof-of-Time (PoT) and Proof-of-Devotion (PoD), which are utilized in the Humanode DAO that we call Vortex. The most eligible nodes are those that have spent more time in the network and have proven their devotion to it more times than the others. There are two ways to prove one’s devotion: either by participating in one of the ecosystem projects approved by Vortex and assembled in Formation, or by having one of one's proposals approved by Vortex. For example, a node which has proven its devotion many times over and has been governing and running for a long time will be much more eligible to become a validator node than any other. Now if a perpetrator somehow manages to spoof some nodes, he would have to wait until all the other nodes that have been there longer and have proven their devotion in some form become validators before his own nodes do. In our PoD and PoT systems, PoD has precedence. This means that if there is a node that has been running for 2 years but without PoD and a node that has been running for a year but with PoD, the latter would be more eligible than the former. Moreover, as mentioned above, the number of validator nodes will be increased in batches. The more nodes there are and the more validators there are that pass Proof-of-Devotion, the more identities a bad actor will have to spoof to conduct an attack, increasing his recurring cost.
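
The precedence rule can be sketched as a simple ordering. The `HumanNode` fields and the exact sort key are assumptions for illustration: I rank by the number of devotion proofs first and by running time second, which reproduces the 2-year versus 1-year example above, but the real Vortex rules may weigh these differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HumanNode:
    node_id: str
    devotion_proofs: int  # PoD: approved proposals or Formation projects
    days_active: int      # PoT: how long the node has been running


def rank_candidates(nodes: list[HumanNode]) -> list[HumanNode]:
    """Most eligible first: PoD takes precedence, PoT breaks ties."""
    return sorted(nodes, key=lambda n: (n.devotion_proofs, n.days_active), reverse=True)


def select_validators(nodes: list[HumanNode], slots: int) -> list[HumanNode]:
    """Fill a batch of validator slots with the most eligible candidates."""
    return rank_candidates(nodes)[:slots]


candidates = [
    HumanNode("two-years-no-pod", devotion_proofs=0, days_active=730),
    HumanNode("one-year-with-pod", devotion_proofs=1, days_active=365),
    HumanNode("fresh-spoofed-node", devotion_proofs=0, days_active=1),
]
ranking = [node.node_id for node in rank_candidates(candidates)]
```

A freshly spoofed identity starts at the bottom of this ordering and only moves up by spending time and earning approvals, which is the extra burden the admission protocol puts on an attacker.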

Proof-of-Existence

Proof-of-Personhood can be divided into two separate approaches: Proof-of-Uniqueness (PoU) and Proof-of-Existence (PoE). One is to prove that someone is a unique human being, the other is to prove that someone exists. Proof-of-Existence is applied by making the human nodes go through biometric processing every month. If a human node fails to prove its existence through biometrics, it will be unplugged and separated from the network until it proves its existence once more. So a potential perpetrator would have to keep up with updated and evolving liveness detection protocols each month. Combining PoE with the human node admission protocol creates an additional layer of defense against potential Sybil nodes and limits the influence they can amass even if they somehow spoof identities that don’t exist.
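
A minimal sketch of the monthly check follows. The 30-day period is my reading of "every month", and the function names are hypothetical.

```python
from datetime import datetime, timedelta

REVERIFICATION_PERIOD = timedelta(days=30)  # assumption: "every month" taken as 30 days


def has_proven_existence(last_verified: datetime, now: datetime) -> bool:
    """A node stays plugged in only while its last biometric check is recent enough."""
    return now - last_verified <= REVERIFICATION_PERIOD


def active_nodes(last_checks: dict[str, datetime], now: datetime) -> set[str]:
    """Return the nodes that remain in the network; the rest are unplugged until re-verified."""
    return {node for node, checked in last_checks.items() if has_proven_existence(checked, now)}


now = datetime(2021, 6, 1)
still_active = active_nodes(
    {
        "verified-last-week": datetime(2021, 5, 25),
        "verified-in-march": datetime(2021, 3, 15),
    },
    now,
)
```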

Defense on Sybil

Sybil-resistance is always a race. Perpetrators get more sophisticated by the day, and the defenders should be constantly on guard, building up defenses of the same level of intricacy. Humanode combines state-of-the-art liveness detection protocols and FHEd multimodal biometric processing with constant proof-of-existence, special validator admission protocols, trusted devices and recurring costs to create a robust Sybil-resistance that would safeguard the system from spoofs and bypasses committed by bad actors. A big part of Sybil defense is in the hands of the human nodes themselves - the more human nodes there are that actively govern and participate in the life of the network, the stronger the resistance, as Sybil identities become less eligible to become validator nodes. Sybil-resistance plays a major part in the existence of the Humanode network, and that is why constant research and development is conducted by Humanode Core to upgrade the defenses. I hope that this article has shed some light on how we are going to fight Sybil nodes. Thank you for reading through.

Wish you a smooth Sybil-resistant rest of the day!

Sources:

The Sybil Attacks and Defenses: A Survey by David Mohaisen and Joongheon Kim, 2013

DeepFace: Closing the Gap to Human-Level Performance in Face Verification by Yaniv Taigman, Ming Yang, Marc’Aurelio Ranzato and Lior Wolf, Facebook AI Research, 2014

ZoOm® 3D Face Matching Self-Certification Report by Josh Rose, John Bernard and Jase Kurasz, 2019

Secure Face Matching Using Fully Homomorphic Encryption by Vishnu Naresh Boddeti, 2018

HERS: Homomorphically Encrypted Representation Search by Joshua J. Engelsma, Anil K. Jain, and Vishnu Naresh Boddeti, 2020

Homomorphic string search with constant multiplicative depth by Charlotte Bonte and Ilia Iliashenko, 2020

Secure Search via Multi-Ring Fully Homomorphic Encryption by Adi Akavia, Dan Feldman, and Hayim Shaul, 2018

Why I Love Biometrics: It’s “liveness,” not secrecy, that counts by Dorothy E. Denning

SybilGuard: defending against sybil attacks via social networks by Haifeng Yu, Michael Kaminsky, Phillip B. Gibbons and Abraham D. Flaxman, 2006

Proof-of-Personhood: Redemocratizing Permissionless Cryptocurrencies by Maria Borge, Eleftherios Kokoris-Kogias, Philipp Jovanovic, Linus Gasser, Nicolas Gailly, Bryan Ford, 2017

Who Watches the Watchmen? A Review of Subjective Approaches for Sybil-resistance in Proof of Personhood Protocols by Divya Siddarth, Sergey Ivliev, Santiago Siri, Paula Berman, 2020

ISO/IEC 30107-3:2017 standard by International Organization for Standardization, 2017

https://dev.facetec.com/

https://liveness.com/