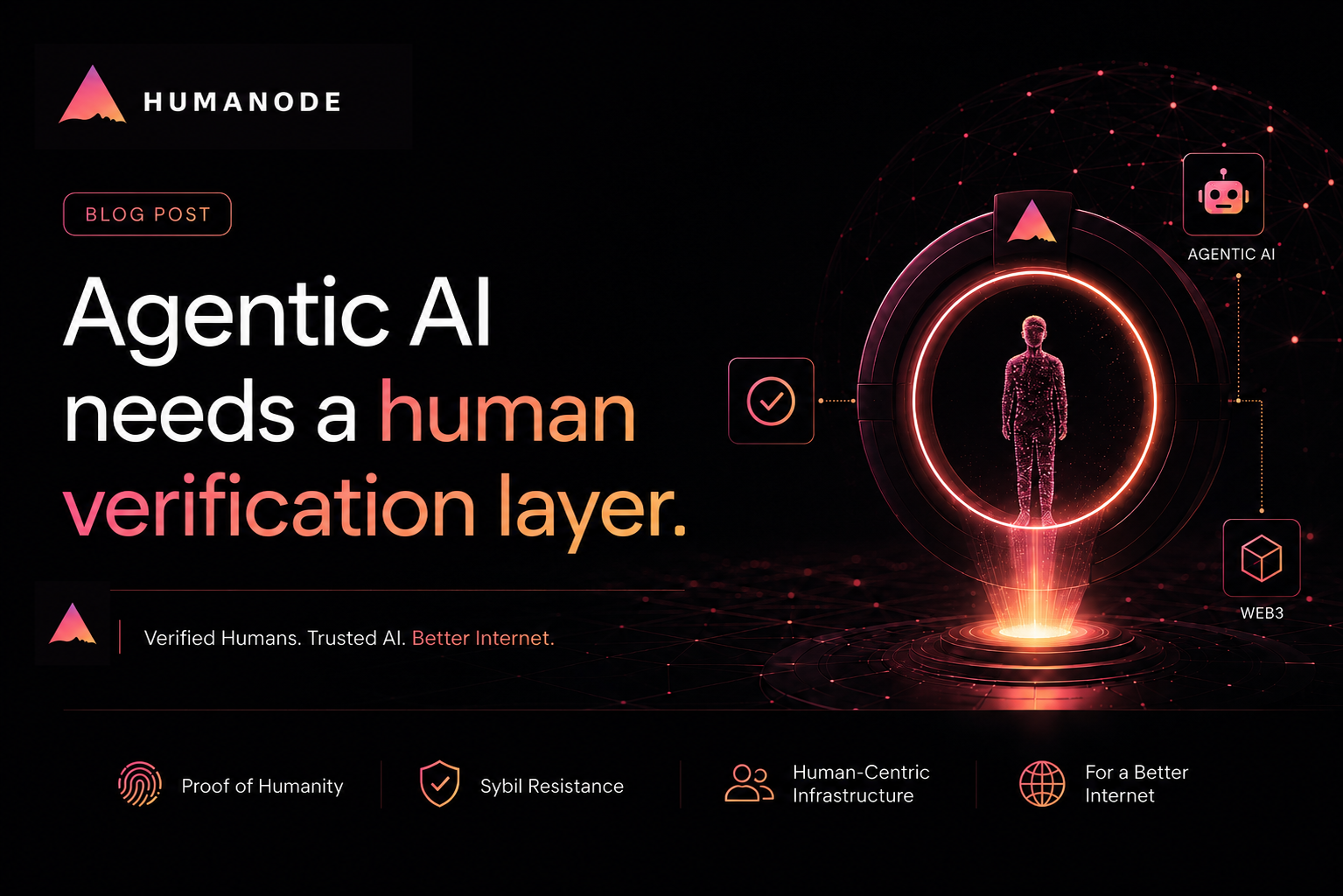

Agentic AI needs a human verification layer. And Web3 hasn't built it yet.

Most people still think of AI as something you chat with. You ask a question, it answers, and sometimes it confidently says something stupid enough to cost you money.

But that version of AI is already outdated. The newer version, called agentic AI, is much more capable but much harder to contain. It can use tools, call APIs, read files, and move through a task on behalf of a user.

In Web3 especially, that shift gets serious quickly because agents can now connect to wallets, smart accounts, payment systems, and smart contracts. A bad answer can be annoying, but you can usually work around it. A bad transaction, on the other hand, can cost real money.

The interesting question is not whether agents are useful. They definitely are. The real sticking point is what happens when these agents get access to things that matter.

AI agents need access

At first, it feels like an AI agent is mostly helping around the edges. It writes a message, explains a topic, summarizes a proposal, or helps you think through the next step. But everything changes once the agent gets access permissions.

That access could be to a wallet, an API, a smart account, a dashboard, a database, an inbox, a community, or a reward system. Once that access is granted, the agent can start doing parts of the task itself.

This kind of autonomous work is already visible in Web3 tooling. Coinbase’s AgentKit is described as a toolkit that enables AI agents to interact with blockchain networks using secure wallet management and onchain features.

> For the agentic economy to overtake the human economy, agents need a way to discover services.
>
> We launched Agentic(.)market to give agents a discovery layer to find and integrate x402 services seamlessly.
>
> Add the skill to your agent. And list your services to start earning… https://t.co/xvxcpGl6El
>
> Brian Armstrong (@brian_armstrong), April 20, 2026

For builders, this turns the design question into a practical one. Useful agents need access: a payment agent requires a way to move value, a DAO assistant needs proposal data, a quest or rewards agent has to check user activity, and a trading agent wants wallet permissions.

The actual design question is how much access agents should get, what guardrails should sit around that access, and who should be recognized as the real human behind the permission.

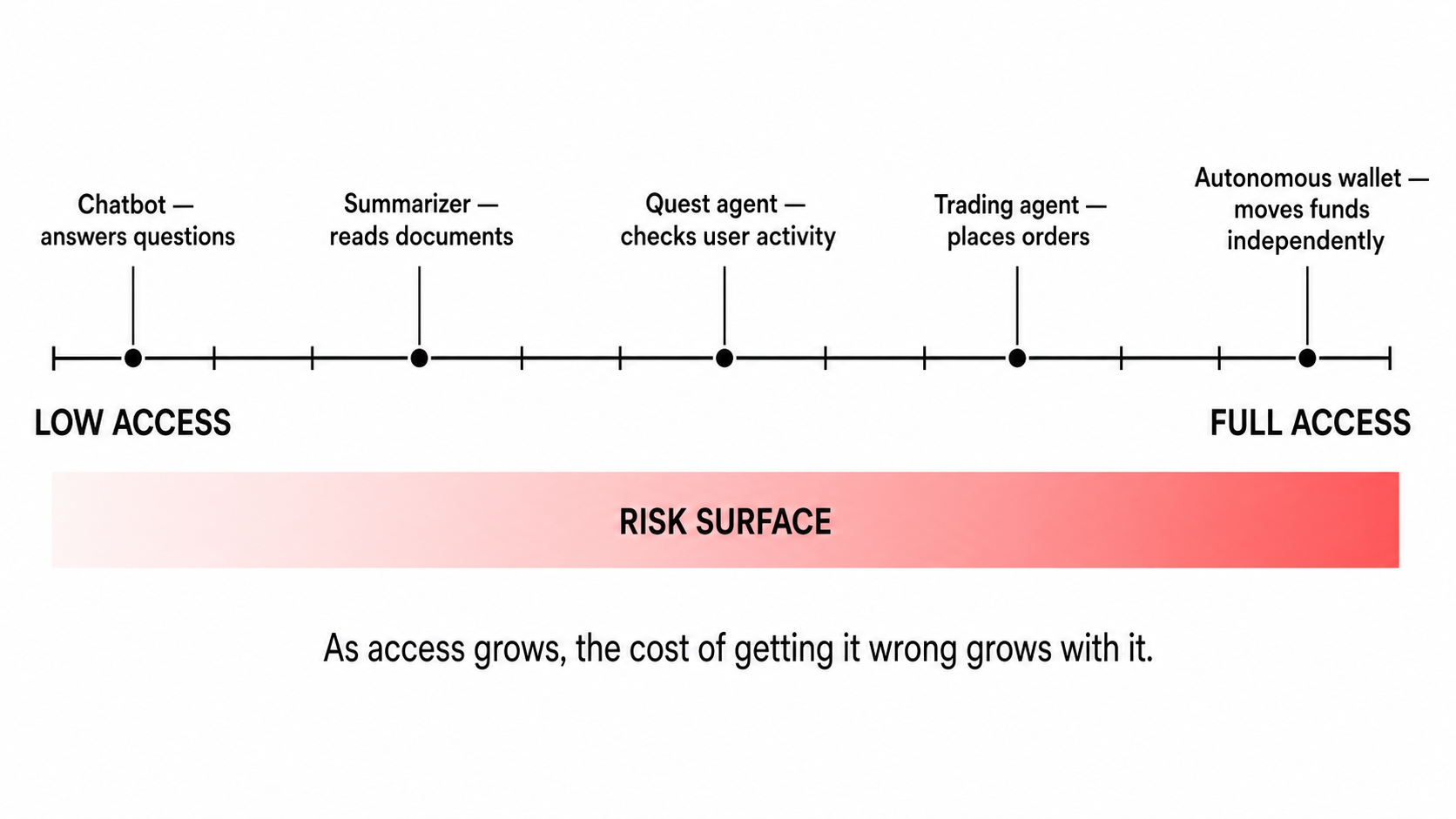

Access creates the problem

The more an agent can see and do, the more useful it becomes. However, as access grows, the risk grows with it.

That’s why developers now talk less about chatbot design and more about access control. Things like scoped permissions, spending limits, session keys, contract allowlists, revocation, audit logs, and human approval are all part of product design.
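To make those guardrails concrete, here is a minimal sketch of how they might combine into a single check that runs before an agent acts. The `AgentPolicy` class, its field names, and the example contract labels are hypothetical and exist only for illustration; they do not come from any specific wallet or agent framework.

```python
import time
from dataclasses import dataclass, field
from typing import List, Optional, Set, Tuple


@dataclass
class AgentPolicy:
    """Hypothetical permission grant for one agent session."""
    allowed_contracts: Set[str]   # contract allowlist
    spend_limit: float            # total the agent may spend in this session
    approval_threshold: float     # larger amounts need a human sign-off
    expires_at: float             # session key expiry (Unix timestamp)
    revoked: bool = False         # the user can flip this to cut access
    spent: float = 0.0
    audit_log: List[Tuple[str, str, float]] = field(default_factory=list)

    def authorize(self, contract: str, amount: float,
                  human_approved: bool = False,
                  now: Optional[float] = None) -> bool:
        """Return True only if every guardrail allows the action."""
        now = time.time() if now is None else now
        if self.revoked:
            decision = "revoked"
        elif now >= self.expires_at:
            decision = "expired"
        elif contract not in self.allowed_contracts:
            decision = "contract not allowlisted"
        elif self.spent + amount > self.spend_limit:
            decision = "spend limit exceeded"
        elif amount > self.approval_threshold and not human_approved:
            decision = "needs human approval"
        else:
            decision = "ok"
            self.spent += amount
        # Every attempt is recorded, whether it was allowed or not.
        self.audit_log.append((decision, contract, amount))
        return decision == "ok"
```

In this sketch, a denied action still lands in the audit log, so the person behind the permission can later see what the agent attempted, not only what it completed.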

Security teams are already taking this concern seriously. OWASP’s LLM risk work includes sensitive information disclosure and insecure plugin design, where weak access control around tools can lead to serious problems.

> Prompt injection tops the OWASP LLM Top 10 and there's no single fix.
>
> Instead, you stack defenses, each one catching what the others miss.
>
> Defenses come in two families: model-level and system-level.
>
> Model-level defenses teach the model to resist injection.
>
> - Spotlighting… pic.twitter.com/hpA9ldcl7b
>
> Alex Xu (@alexxubyte), April 29, 2026

For systems with agents, access shouldn’t feel unlimited. Products need clear rules about what the agent can do, when it can do it, and who is responsible: the agent, its creator, or the platform that gave access.

The stakes shift quickly when money is involved.

Where things can break

The first serious pressure point is wallet and payment access.

Once an agent can interact with a wallet, account, payment system, or smart contract, a simple mistake can turn into a financial disaster. It may approve the wrong transaction, interact with a risky contract, repeat a payment, or move outside the user’s intended limits.

Chainlink has already pointed to rogue agents making unauthorized transactions or entering infinite spending loops as a serious concern for agent payments.
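One way to blunt repeated payments and runaway loops is a small guard that sits in front of the payment call. The sketch below is an assumption about how such a guard could look, not a description of Chainlink's or any other vendor's approach. `PaymentGuard`, the idempotency keys, and the window sizes are all hypothetical.

```python
from collections import deque


class PaymentGuard:
    """Hypothetical guard placed in front of an agent's payment call."""

    def __init__(self, max_payments: int, window_seconds: float):
        self.max_payments = max_payments
        self.window_seconds = window_seconds
        self.recent = deque()    # timestamps of accepted payments
        self.seen_keys = set()   # idempotency keys that were already paid

    def allow(self, idempotency_key: str, now: float) -> bool:
        # Reject exact repeats: the same key means the same logical payment.
        if idempotency_key in self.seen_keys:
            return False
        # Forget accepted payments that have left the rolling window.
        while self.recent and now - self.recent[0] >= self.window_seconds:
            self.recent.popleft()
        # Trip the breaker if the agent is paying too often.
        if len(self.recent) >= self.max_payments:
            return False
        self.recent.append(now)
        self.seen_keys.add(idempotency_key)
        return True
```

The idempotency key stops the same invoice from being paid twice, while the rolling window caps how many distinct payments an agent can make in a short period, which is what limits a spending loop.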

The same access problem shows up with private data.

An agent might need access to your files, inboxes, dashboards, customer records, community tools, or internal databases to do its job. If boundaries are too loose, it could expose information that was only meant for a specific task.

After that comes accountability.

If an agent causes harm, there still needs to be a real person, team, or organization behind the permission: someone who created the agent, gave it access, set its limits, or benefited from its actions.

In Web3, the same pattern spreads into governance, apps, communities, and rewards.

Agents can help people read proposals, compare arguments, and understand votes, which is useful. But things get complicated when one person pretends to be public opinion.

The risk increases when agents create fake support, comments, opposition, and participation across many accounts.

The same pattern can appear in apps. Agent swarms can create fake users, fake engagement, fake reputation, and fake social proof.

Similarly, for airdrops, points programs, quests, referrals, allowlists, and game rewards, agents can manage accounts, complete tasks, and make one operator look like many users.

For those of us building in Web3, fake scale is already familiar. A wallet farm can look like a user base. Quest farming can look like product usage. Fake engagement can look like community growth. Coordinated accounts can make governance support look stronger than it really is.

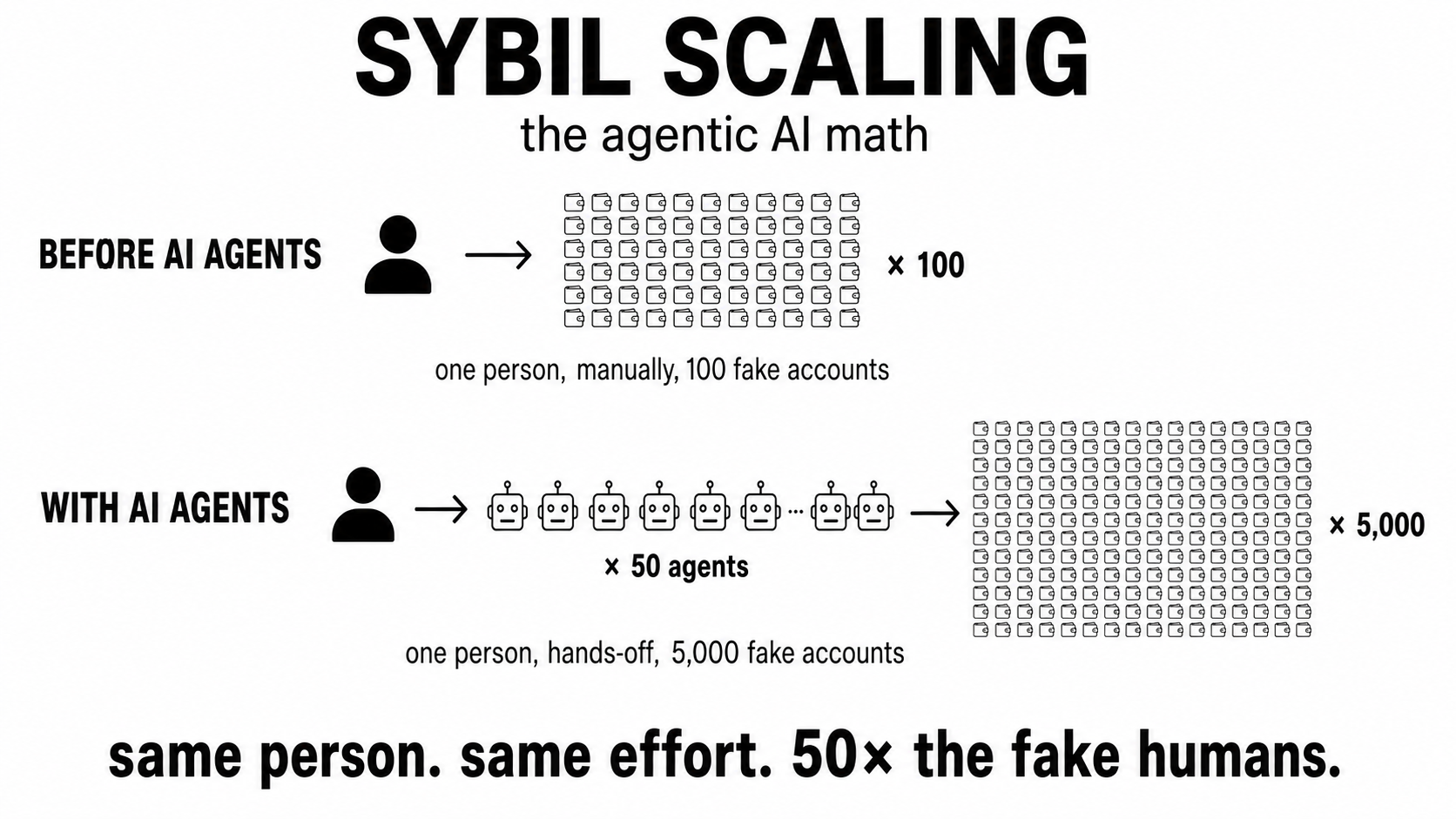

AI agents make this even easier because they add automation, context, and decision-making to the old Sybil tactics. One person can run many agents. Those agents can manage lots of accounts, and those accounts can create activity that looks human from the outside.

Sybil farming used to scale linearly. Agents made it exponential.

> We believe it is in the protocol's best interest to distribute tokens to durable users — not sybil farmers.
>
> If you are a sybil, you have two options:
>
> – Self-report sybil addresses for 15% of your intended allocation. No questions asked. The deadline to do so is May 17th.
>
> – Do… pic.twitter.com/Kme9ZKckC7
>
> LayerZero (@LayerZero_Core), May 3, 2024

The damage isn't theoretical. LayerZero's June 2024 airdrop disqualified 803,000 wallets, about 13% of the eligible base, using community reports plus on-chain analysis from Chaos Labs and Nansen.

That was 2024, before agentic AI was deployable at scale. The defense ran on humans manually flagging humans. The next round won't have that luxury.

So the issue is not only that agents may make mistakes. The bigger problem is that agentic systems make fake participation easier to create and harder to spot.

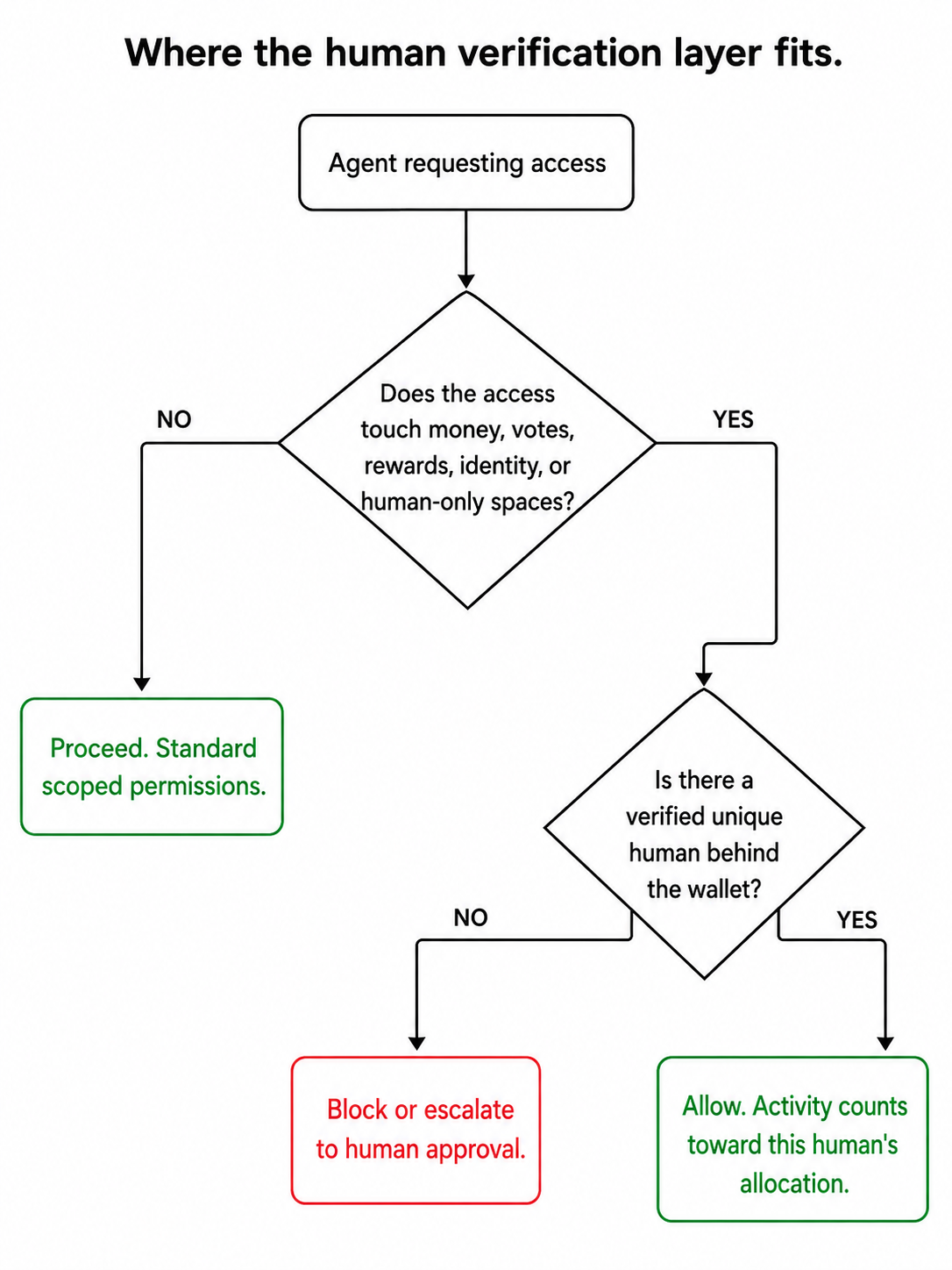

That’s why having a human layer matters when a system gives access, rewards, reputation, or voting power to users.

Apps need a way to tell when activity comes from real, unique people and when it’s just automation pretending to be users.
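As a rough illustration of the gap between apparent scale and human-backed scale, the sketch below compares the number of active wallets with the number of distinct humans behind them. The `wallet_to_human` mapping is a hypothetical stand-in for whatever uniqueness signal an app has available (an opaque ID per verified person), and the function name is invented for this example.

```python
def summarize_participation(active_wallets, wallet_to_human):
    """Compare apparent scale (wallets) with human-backed scale.

    wallet_to_human is a hypothetical mapping from a wallet address to an
    opaque uniqueness ID. Wallets with no entry count as unverified.
    """
    wallets = set(active_wallets)
    humans = {wallet_to_human[w] for w in wallets if w in wallet_to_human}
    unverified = {w for w in wallets if w not in wallet_to_human}
    return {
        "wallets": len(wallets),
        "unique_humans": len(humans),
        "unverified_wallets": len(unverified),
    }


# Four wallets look like four users, but only two distinct humans back them.
report = summarize_participation(
    ["0xa", "0xb", "0xc", "0xd"],
    {"0xa": "human-1", "0xb": "human-1", "0xc": "human-2"},
)
print(report)
```

A dashboard that reports only the first number would count a wallet farm as growth. Reporting all three keeps the difference visible.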

Proof of Humanity (PoH) as human-backed access

A human check, in this context, is less about the agent itself and more about the permission behind it. Before an agent receives access to a wallet, reward system, governance flow, or human-only space, the system should be able to ask one simple question:

Is there one real, unique human behind this account, wallet, or permission?

In practice, that question plays out differently depending on the product. For an agent wallet, it could mean certain permissions only activate when the wallet is linked to a unique human. For a rewards platform, it could mean a quest, an airdrop, or a points campaign that counts only activity from wallets backed by real people. For a DAO, it could mean AI helps users understand proposals while voting rights remain tied to accounts backed by a real human.
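The rewards case can be sketched as a claim flow that accepts one claim per unique human rather than one per wallet. This is an illustrative sketch under stated assumptions: `HumanGatedCampaign` and `lookup_human` are hypothetical names, and `lookup_human` stands in for any uniqueness check that returns an opaque ID for a verified wallet and `None` otherwise. It is not the API of any particular proof-of-humanity product.

```python
from typing import Callable, Dict, Optional


class HumanGatedCampaign:
    """Hypothetical reward campaign that pays once per unique human."""

    def __init__(self, lookup_human: Callable[[str], Optional[str]]):
        # lookup_human returns an opaque uniqueness ID, or None if the
        # wallet is not linked to a verified unique human.
        self.lookup_human = lookup_human
        self.claimed_by: Dict[str, str] = {}  # human ID -> claiming wallet

    def claim(self, wallet: str) -> str:
        human_id = self.lookup_human(wallet)
        if human_id is None:
            return "rejected: wallet not linked to a unique human"
        if human_id in self.claimed_by:
            return "rejected: this human already claimed"
        self.claimed_by[human_id] = wallet
        return "accepted"


# Example: two wallets controlled by the same person, one unverified wallet.
links = {"0xa": "human-1", "0xb": "human-1"}
campaign = HumanGatedCampaign(links.get)
print(campaign.claim("0xa"))
print(campaign.claim("0xb"))
print(campaign.claim("0xc"))
```

The campaign never learns who the person is. It only needs an ID that is stable per human, which is what keeps this a uniqueness check rather than an identity check.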

Overall, PoH works best when it quietly sits in the access layer. It answers one question, "is there a human here?", without becoming an identity check.

Where Humanode fits in this

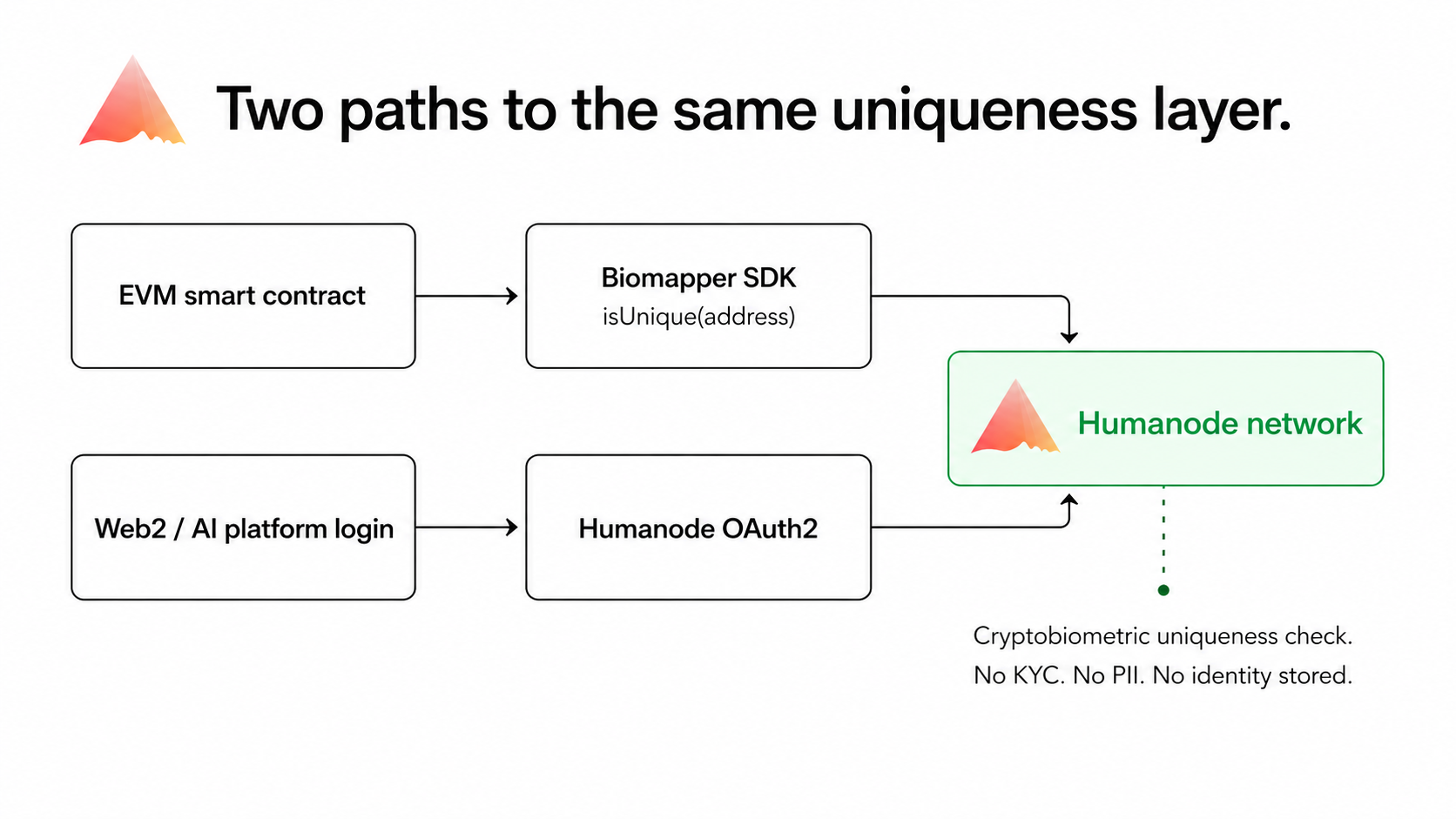

Apps should be able to check if a wallet or account belongs to a unique human, without asking for the user’s full identity when they join, claim a reward, or activate a permission.

And that is precisely what Humanode is built for.

At its core, Humanode is a cryptobiometric network that proves human uniqueness. It lets systems check if there’s a real, unique person behind an account or wallet without turning the user into an identity file.

From there, the right Humanode product depends on what the app developer is working with.

For onchain agents that hold wallets and call contracts, Biomapper checks uniqueness in a single Solidity import; no KYC, no identity, works on any EVM chain.

For Web2 platforms and AI tools where access begins at login, Humanode OAuth2 adds the same uniqueness check at the auth layer.

The idea behind these products is simple: check for a unique human wherever fake scale could harm access, rewards, reputation, governance, or community trust.

Simply put, the goal is to make sure there’s a real person behind every AI agent on your platform.

Summing Up

The main point is that AI agents need access to be useful. The goal is to make that access safer and easier to trust.

Some of that can be done with technical guardrails like scoped permissions, spending limits, session keys, contract allowlists, audit logs, revocation, and human approval for sensitive actions.

Another part comes from the human layer.

When an agent is about to access money, rewards, governance, reputation, or human-only spaces, the system should verify that a real, unique human holds that permission.

For AI agent developers, agent wallets, reward systems, DAO tools, or Web3 communities, a human check can be part of the agent access stack before fake scale, financial losses, identity theft, or misuse of private data become part of the product.

Agentic AI isn’t waiting for Web3 to figure this out. Either we leave agentic AI uncontrolled, or we add a human layer to ensure there’s a unique human behind it who takes responsibility for AI actions.

And with Humanode, that human layer doesn’t have to be identity information or KYC; it’s simply a check that there’s a unique human behind the account.

More on Humanode and its human uniqueness layer on the official website: https://humanode.io